Pure Moderation is a global business process outsourcing vendor specializing in a range of services. Our priority is to provide affordable solutions that are custom-tailored to the individual needs of our partners. Working with us allows you to operate your business more efficiently. Whether your requirements involve small, time-based operations or large deployments, our team can quickly scale up or down to suit your needs. With office locations throughout Asia, Pure Moderation deploys various teams that provide support in multiple languages. Our clients range from small start-ups to global organizations – whatever size your business is, let us help you!

We’ve enjoyed an amazing partnership with Pure Moderation – Gear Inc. over the last several years. Although we operate in opposite time zones, I have the utmost confidence that we’re covered as a support organisation, given the exceptional team that Pure Moderation has built and maintained for us. Thank you, Pure Moderation!

Pure Moderation has been a game-changer for us. They’ve allowed us to efficiently scale our support operations, ensuring our users receive excellent support. The team is highly skilled and focused, consistently hitting set SLAs. I really value how they stay in constant contact with our internal team.

I look forward to continuing our partnership in the future.

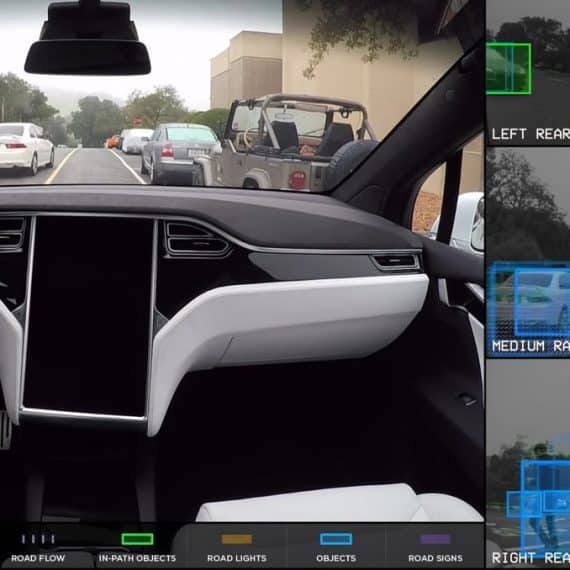

Pure Moderation – Gear Inc.’s innovative technology has made our team more efficient in moderating our community. Incredibly personalised service, helpful and responsive team – we’re glad to be partnered with them!